Optimizing Cost-Efficiency in LLM Serving with Heterogeneous GPUs

A Comprehensive Guide

The rise of Large Language Models (LLMs) across natural language processing and other AI-driven applications has reshaped how businesses approach artificial intelligence. As adoption grows, so does the need to deploy these massive models cost-efficiently.

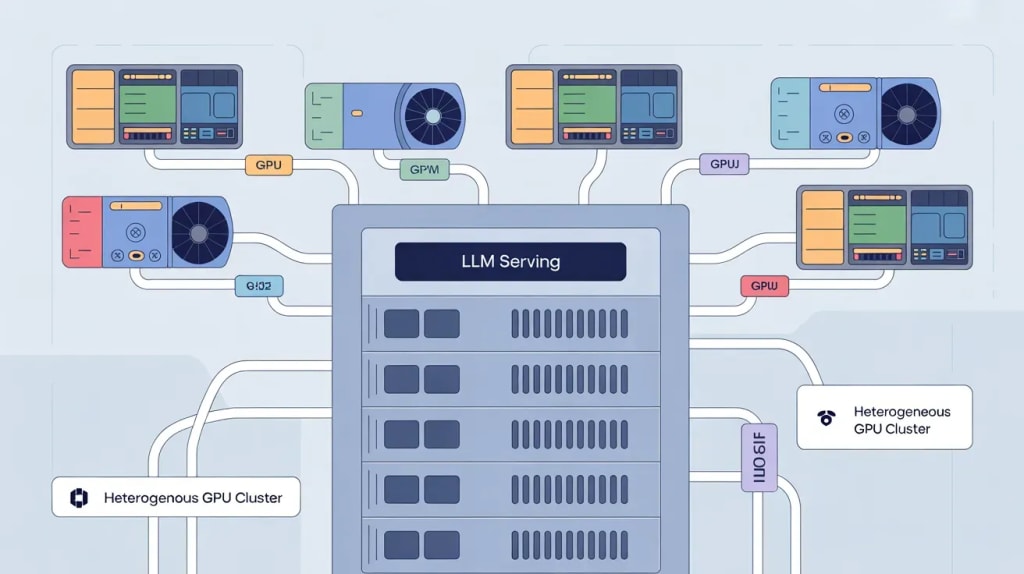

Serving LLMs over heterogeneous GPUs is a promising strategy to achieve both high performance and cost-efficiency. This article dives deep into the mechanisms behind serving LLMs over heterogeneous GPUs, offering insights into optimizing resource allocation, reducing operational costs, and ensuring peak performance in AI workloads.

What Are Heterogeneous GPUs, and Why Are They Key to Efficient LLM Serving?

A heterogeneous GPU setup uses multiple types of GPUs within the same computing environment, each with distinct capabilities. These differences may include processing power, memory capacity and bandwidth, or energy efficiency.

The concept behind using heterogeneous GPUs for LLM deployment is to allocate specific tasks to the most suitable GPU based on its strengths, ensuring optimal resource utilization.

LLMs, especially the large-scale ones used in advanced AI applications, require significant computational resources. By leveraging a diverse set of GPUs, organizations can execute tasks like training, inference, and data preprocessing in a way that reduces costs and maximizes performance.

This setup allows businesses to tap into the benefits of both high-performance GPUs for demanding tasks and more affordable GPUs for simpler workloads.

How to Maximize Cost-Efficiency in LLM Serving Using Heterogeneous GPUs

Achieving cost-efficiency when serving LLMs over heterogeneous GPUs requires strategic planning and thoughtful implementation. Below are key techniques that can help businesses optimize their GPU usage, cut costs, and ensure consistent performance across their AI operations.

1. Task-Specific GPU Allocation for Maximum Efficiency

Allocating tasks based on the strengths of each GPU is one of the most effective ways to maximize cost-efficiency. High-end GPUs, such as the NVIDIA A100 or Tesla V100, excel in handling complex training tasks that demand intensive computational resources. These GPUs are designed to process massive amounts of data quickly, making them ideal for LLM training.

On the other hand, GPUs like the NVIDIA T4, which are more energy-efficient and less powerful, can be assigned to simpler tasks such as running inference models or performing data preprocessing.

By leveraging this selective allocation approach, businesses avoid over-provisioning high-performance GPUs for tasks that don’t require them, resulting in significant cost savings.
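The selection rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a production scheduler: the `GpuType` class, the `cheapest_suitable_gpu` helper, and every speed score and hourly price below are assumptions made up for the example and should be replaced with measured throughput and real pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuType:
    name: str
    memory_gb: int
    relative_speed: float  # hypothetical throughput score, not a benchmark
    hourly_cost: float     # hypothetical price in USD per hour


# Illustrative fleet; speed scores and prices are assumptions.
FLEET = [
    GpuType("T4", memory_gb=16, relative_speed=1.0, hourly_cost=0.50),
    GpuType("V100", memory_gb=32, relative_speed=3.0, hourly_cost=2.50),
    GpuType("A100", memory_gb=80, relative_speed=6.0, hourly_cost=4.00),
]


def cheapest_suitable_gpu(min_memory_gb, min_speed, fleet=FLEET):
    """Return the lowest-cost GPU type that meets both task requirements."""
    candidates = [
        gpu for gpu in fleet
        if gpu.memory_gb >= min_memory_gb and gpu.relative_speed >= min_speed
    ]
    if not candidates:
        raise ValueError("no GPU type in the fleet satisfies the task")
    return min(candidates, key=lambda gpu: gpu.hourly_cost)
```

With these assumed numbers, a light inference task such as `cheapest_suitable_gpu(8, 1.0)` lands on the T4, while a task that needs 40 GB of memory is sent to the A100. The point of the design is that the expensive GPU is chosen only when nothing cheaper qualifies.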

2. Dynamic Resource Scaling Based on Demand

Dynamic scaling is a technique where the number of GPUs in use is adjusted based on real-time demand. This practice ensures that resources are allocated in a way that matches the workload at any given time.

During peak times, more GPUs can be activated to absorb spikes in inference traffic or large training jobs. Conversely, during off-peak hours or lower-demand periods, GPUs that are not needed can be powered down, reducing operational costs.

This flexibility allows businesses to scale their GPU resources in a way that aligns with real-world usage, ensuring that they don’t spend money on idle hardware. Dynamic scaling is particularly useful for AI environments where usage fluctuates depending on factors like task complexity and data volume.
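As a rough sketch of how such a scaling decision might be computed, the function below turns a measured request rate into a GPU count. The name `replicas_needed`, the 20% headroom, and the replica limits are all hypothetical choices for illustration; a real autoscaler would also smooth the signal over time to avoid rapid scale-up and scale-down cycles.

```python
import math


def replicas_needed(requests_per_sec, capacity_per_gpu,
                    min_replicas=1, max_replicas=8, headroom=0.2):
    """Estimate how many GPUs to keep active for the current load.

    capacity_per_gpu is the request rate one GPU can sustain (an assumed,
    measured value). headroom adds spare capacity on top of the raw demand.
    """
    if capacity_per_gpu <= 0:
        raise ValueError("capacity_per_gpu must be positive")
    target = requests_per_sec * (1 + headroom) / capacity_per_gpu
    # Clamp to the configured limits so the fleet never scales to zero
    # or beyond the budgeted maximum.
    return max(min_replicas, min(max_replicas, math.ceil(target)))
```

Calling this periodically with the latest traffic figure gives the number of GPUs to keep running; anything above that number is a candidate for powering down.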

3. GPU Virtualization for Optimized Resource Utilization

GPU virtualization is a powerful technique that allows multiple tasks to run simultaneously on a single GPU. In a heterogeneous environment, this can be extremely beneficial as it maximizes the utilization of each GPU, ensuring that no hardware sits idle.

Virtualization enables multiple instances of a model to share GPU resources, improving throughput and reducing the need for additional physical GPUs.

In the context of LLM serving, GPU virtualization lets a large GPU be partitioned so that several smaller models, or several replicas of one model, each run in their own isolated slice. NVIDIA's Multi-Instance GPU (MIG) feature on the A100 is one example. This not only improves the cost-effectiveness of using GPUs but also enhances the overall efficiency of AI model execution.
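Deciding which model instances share which GPU is essentially a packing problem. The sketch below uses a simple first-fit-decreasing heuristic over memory alone; the function name `pack_instances` and all instance and GPU names are hypothetical, and the code does not model the virtualization mechanism itself, compute contention, or fixed partition sizes.

```python
def pack_instances(instance_mem_gb, gpu_mem_gb):
    """Place model instances onto GPUs using first-fit decreasing.

    instance_mem_gb: mapping of instance name -> memory needed (GB)
    gpu_mem_gb:      mapping of GPU name -> total memory (GB)
    Returns (placement, unplaced), where placement maps each GPU to the
    instances assigned to it.
    """
    free = dict(gpu_mem_gb)  # remaining memory per GPU
    placement = {gpu: [] for gpu in gpu_mem_gb}
    unplaced = []
    # Largest instances first, so they are not blocked by small ones.
    ordered = sorted(instance_mem_gb.items(), key=lambda kv: kv[1], reverse=True)
    for name, need in ordered:
        for gpu in free:
            if free[gpu] >= need:
                free[gpu] -= need
                placement[gpu].append(name)
                break
        else:
            unplaced.append(name)  # no GPU has enough free memory
    return placement, unplaced
```

Instances that end up in `unplaced` are the signal that another physical GPU is actually needed; everything else fits on hardware that is already paid for.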

4. Energy-Efficient GPUs for Non-Essential Tasks

Energy consumption is a significant factor in the total cost of running LLMs, especially when dealing with high-performance GPUs that consume a lot of power. One way to reduce energy costs is by using lower-power GPUs, such as the NVIDIA T4 (rated at 70 W), for tasks that don’t require high computational power. These tasks may include light inference or running pre-trained models for simple applications.

By selecting the right GPU for each task, businesses can keep their energy usage low without sacrificing performance. For example, using a high-performance GPU for training and a more power-efficient GPU for inference ensures that the system remains cost-effective and energy-efficient at the same time.
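A back-of-the-envelope calculation makes the energy difference concrete. The sketch below assumes each GPU draws its rated board power continuously (70 W for a T4, 400 W for an A100 in its SXM form), which is an upper bound rather than a measurement, and it uses a hypothetical electricity price of 0.12 USD per kWh. The function name `energy_cost` is likewise made up for the example.

```python
def energy_cost(power_watts, hours, price_per_kwh):
    """Electricity cost of running a device at constant power.

    power_watts:   assumed average draw (rated power is an upper bound)
    hours:         running time
    price_per_kwh: electricity price, in any currency
    """
    kwh = power_watts / 1000 * hours
    return kwh * price_per_kwh


HOURS_PER_MONTH = 24 * 30  # 720 hours, assuming a 30-day month
PRICE = 0.12               # hypothetical USD per kWh

t4_monthly = energy_cost(70, HOURS_PER_MONTH, PRICE)
a100_monthly = energy_cost(400, HOURS_PER_MONTH, PRICE)
```

Under these assumptions the T4 comes to roughly 6.05 USD per month and the A100 to roughly 34.56 USD. This compares power only: a faster GPU may finish far more work per watt-hour, so the fairer figure for a real decision is energy cost per request served.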

5. Optimizing GPU Performance with Specialized Frameworks

To fully optimize the performance of heterogeneous GPUs, businesses can leverage GPU-accelerated libraries and AI frameworks. NVIDIA's CUDA platform, and frameworks built on top of it such as TensorFlow and PyTorch, are optimized to run on GPUs, allowing organizations to get the most out of their hardware.

These frameworks provide tools for distributing workloads across multiple GPUs, which helps shorten the time each task takes.

GPU-optimized libraries make better use of each GPU's compute, leading to faster processing times and more efficient resource allocation. By using these frameworks, businesses can minimize the need for additional GPUs, thereby reducing costs and improving overall system performance.
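In a mixed fleet, splitting work evenly would leave the faster GPUs waiting on the slower ones. One simple policy, assumed here purely for illustration and not taken from any particular framework, is to divide each batch in proportion to measured throughput. The function name `split_batch` and the throughput figures are hypothetical.

```python
def split_batch(batch_size, throughput):
    """Split a batch across GPUs in proportion to their throughput.

    throughput: mapping of GPU name -> relative throughput (assumed, measured)
    Returns a mapping of GPU name -> number of items, summing to batch_size.
    """
    total = sum(throughput.values())
    if total <= 0:
        raise ValueError("total throughput must be positive")
    exact = {gpu: batch_size * t / total for gpu, t in throughput.items()}
    shares = {gpu: int(x) for gpu, x in exact.items()}  # round down first
    leftover = batch_size - sum(shares.values())
    # Give the remaining items to the GPUs with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda g: exact[g] - shares[g], reverse=True)
    for gpu in by_remainder[:leftover]:
        shares[gpu] += 1
    return shares
```

For example, with relative throughputs of 6, 3, and 1, a batch of 10 items is split 6, 3, and 1, so all three GPUs finish at about the same time.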

Addressing the Challenges of Heterogeneous GPU Serving

While there are numerous benefits to serving LLMs over heterogeneous GPUs, some challenges need to be addressed:

Complex Setup and Management: Configuring and managing a system with multiple types of GPUs can be more complex than using a single type of GPU. Organizations must ensure that workloads are properly allocated and that the different GPUs can work together seamlessly.

Compatibility Issues: Not all software frameworks or LLMs are optimized for heterogeneous GPU environments. Ensuring compatibility between hardware and software is essential for avoiding performance bottlenecks or inefficiencies.

Resource Scheduling: Effective resource scheduling is critical in ensuring that each GPU is fully utilized and that no resources are wasted. Without proper monitoring and scheduling, some GPUs may go underutilized, leading to higher operational costs.
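The monitoring side of the scheduling challenge can start very simply. The sketch below flags GPUs whose average utilization falls under a threshold; it assumes utilization samples (values between 0 and 1) have already been collected by some monitoring tool, and the function name `underutilized`, the GPU names, and the 30% threshold are hypothetical.

```python
from statistics import mean


def underutilized(samples, threshold=0.3):
    """Return the GPUs whose mean utilization is below the threshold.

    samples: mapping of GPU name -> list of utilization readings in [0, 1]
    GPUs with no readings are skipped rather than reported.
    """
    return sorted(
        gpu for gpu, readings in samples.items()
        if readings and mean(readings) < threshold
    )
```

A GPU that shows up in this list repeatedly is a candidate for taking on more work, for sharing between tasks, or for being powered down.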

Conclusion: The Future of LLM Serving with Heterogeneous GPUs

Serving LLMs over heterogeneous GPUs represents a significant opportunity to reduce costs while maintaining high performance. By implementing strategies such as task-specific allocation, dynamic scaling, GPU virtualization, and energy-efficient hardware, businesses can optimize their AI operations to be both cost-effective and high-performing.

Despite some challenges in managing heterogeneous environments, the long-term savings and performance improvements make it a worthwhile investment. As AI workloads continue to grow, the use of heterogeneous GPUs will be a crucial factor in ensuring that LLM serving remains affordable and scalable for organizations worldwide.

FAQs

What are the benefits of using heterogeneous GPUs for LLM serving?

Heterogeneous GPUs allow for more efficient resource allocation, reducing costs and improving the performance of AI models by leveraging the strengths of different GPUs.

How does dynamic scaling help reduce costs?

Dynamic scaling adjusts the number of GPUs in use based on demand, ensuring businesses only pay for the resources they need at any given moment.

What is GPU virtualization, and how does it optimize resource usage?

GPU virtualization enables multiple tasks to run simultaneously on a single GPU, maximizing hardware utilization and reducing the need for additional physical GPUs.

How can energy-efficient GPUs lower operational costs?

Energy-efficient GPUs draw less power, so running non-intensive tasks on them lowers energy costs while still meeting the performance those tasks need.

Why are GPU-accelerated frameworks important for LLM serving?

GPU-accelerated frameworks like TensorFlow and PyTorch are built to run efficiently on GPUs, which means faster processing times and better utilization of available resources.


About the Creator

Backlinks Cart

Mohsin (CEO BacklinksCart Agency).
